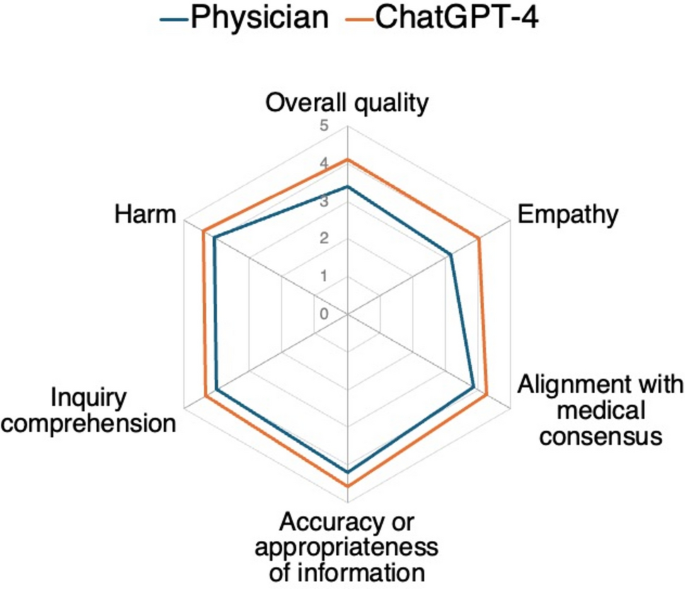

A comparative analysis of physician responses and those generated by an LLM-driven chatbot in real-world otorhinolaryngological scenarios was conducted using six criteria: overall quality, empathy, adherence to medical consensus, information appropriateness, accuracy of comprehension, and harmful content. In the context of our forum-based study using data from a single online platform, experts consistently rated the AI chatbot responses higher in quality, with the chatbot significantly outperforming physicians across all criteria. Point-biserial correlation analysis revealed that evaluators’ preference for ChatGPT-4’s responses (over physicians’) was moderately positively associated with stronger alignment with medical consensus, fewer informational inaccuracies, lower potential harm, better inquiry comprehension, and greater empathy. Ordinal logistic regression identified significant predictors associated with higher response quality. For physician responses on the forum, significant predictors of higher quality ratings were greater empathy, stronger alignment with medical consensus, higher information accuracy, better inquiry comprehension, lower potential for harm, and longer responses. ChatGPT-4 responses were similarly associated with significantly higher quality ratings when demonstrating greater empathy, stronger alignment with medical consensus, fewer inaccuracies, and lower potential harm.
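For readers unfamiliar with the point-biserial correlation mentioned above, the following minimal Python sketch shows how such a coefficient relates a binary variable (for example, whether an evaluator preferred the chatbot response) to a numeric rating. The variable names and the data are hypothetical illustrations, not values from this study, and the coding of the variables used in the actual analysis is an assumption.

```python
from math import sqrt


def point_biserial(binary, scores):
    """Point-biserial correlation between a 0/1 variable and a numeric one.

    Equivalent to the Pearson correlation when one variable is dichotomous.
    """
    if len(binary) != len(scores):
        raise ValueError("inputs must have the same length")
    group1 = [s for b, s in zip(binary, scores) if b == 1]
    group0 = [s for b, s in zip(binary, scores) if b == 0]
    n1, n0, n = len(group1), len(group0), len(scores)
    if n1 == 0 or n0 == 0:
        raise ValueError("both groups must be non-empty")
    mean1 = sum(group1) / n1
    mean0 = sum(group0) / n0
    mean_all = sum(scores) / n
    # Population standard deviation of all scores (divide by n, not n - 1).
    sd_all = sqrt(sum((s - mean_all) ** 2 for s in scores) / n)
    if sd_all == 0:
        raise ValueError("scores must not be constant")
    return (mean1 - mean0) / sd_all * sqrt(n1 * n0) / n


# Hypothetical example: 1 = evaluator preferred the chatbot response.
preferred_chatbot = [1, 1, 1, 0, 1, 0, 1, 0]
# Hypothetical rating difference (chatbot minus physician) on one criterion.
empathy_gap = [2, 1, 2, 0, 1, -1, 1, 0]

r_pb = point_biserial(preferred_chatbot, empathy_gap)
print(round(r_pb, 3))
```

A positive coefficient indicates that the numeric rating tends to be higher when the binary variable equals 1; values around 0.3 to 0.5 are often described as moderate, although such thresholds are conventions rather than fixed rules.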

The expert panel preferred chatbot-generated responses, favoring them in over 70% of cases. This preference remained consistent in terms of perceived accuracy of information and appropriateness of responses. However, a systematic review has raised concerns regarding the role of ChatGPT in healthcare, including outdated knowledge (limited to 2021), misinformation, and overly detailed responses16. While LLMs have exhibited high accuracy in clinical decision-making, particularly when using repeated clinical information to enhance reasoning17, their potential for inaccurate or incomplete advice remains a critical limitation16,18. Notably, physicians have outperformed ChatGPT-3 in diagnostic accuracy for common complaints19. However rare such inaccuracies may be, healthcare cannot rely solely on AI tools. Consistent with our findings, Ayers et al. analyzed AI and physician responses in social media-based medical consultations and reported ChatGPT’s superiority in general medical inquiries5. In contrast, Bernstein et al. found no significant difference between AI- and ophthalmologist-generated responses regarding misinformation, potential harm, or adherence to medical standards4. These discrepancies suggest that AI’s applicability and evaluation criteria may vary by medical specialty, highlighting the need for further research on domain-specific AI performance. ChatGPT’s lack of transparency and unclear data sources pose significant challenges for personalized medicine, occasionally leading to surprisingly inaccurate medical decisions20,21.

Nevertheless, AI’s increasing accuracy in real-world clinical settings has played a critical role in reducing harm. LLM-powered chatbots have become significantly better at handling open-ended inquiries of varying difficulty, suggesting their growing applicability with ongoing advancements in AI models21. ChatGPT’s transformer-based architecture and training objective emphasize fluency and coherence, making it particularly effective at simplifying complex medical terminology and enhancing the accessibility of medical information for laypersons; however, this same design can also lead to oversimplified answers that omit clinically important nuance, especially when the model is handling inputs near the limit of its context window. Despite these limitations, our findings further support the reliability of LLM technology in delivering accurate medical advice.

Can AI-driven chatbots accurately interpret medical contexts? The frequent use of specialized language and nuanced terminology in medical settings presents significant challenges for general chatbots. However, a meta-analysis has shown that advanced LLMs designed for healthcare can effectively understand medical terminology, enhancing patient interaction and care6. Our results suggest that these AI models are capable of interpreting medical contexts and bridging communication barriers. Despite limited research on aligning AI chatbot responses with medical consensus, ChatGPT-3.5 and 4 have shown effectiveness comparable to established online medical resources and adherence to clinical guidelines in specific applications9,22. Additionally, patient complaints often involve a complex mix of physical, psychological, and social factors, making it challenging for physicians to fully understand them without misinterpretation. This study suggests that ChatGPT can effectively organize and interpret patient inquiries from multiple perspectives, demonstrating a robust understanding of medical contexts and potentially reducing the influence of human cognitive biases.

This study highlights that, within the specific context of the Reddit AskDocs forum, chatbot responses were perceived as more empathetic than those of physicians, even in complex medical scenarios, aligning with existing literature. Similarly, a previous study found that ChatGPT-generated responses on medical social platforms were rated higher for empathy than those of human physicians5. A systematic review further supported using AI technologies to enhance empathy and relational behavior in healthcare23. However, empathy and contextual understanding derived from direct human interaction remain irreplaceable by ChatGPT24. This limitation could affect care quality, particularly for patients with suicidal tendencies or mental illnesses, where AI-assisted counseling is considered inappropriate25. By contrast, ChatGPT has been shown to facilitate empathetic communication between healthcare professionals and patients, even in non-English-speaking regions26. Online interactions also allow clinicians to support individuals lacking access to local healthcare27. Human physicians are constrained by time and cannot consistently maintain empathy and politeness, whereas AI is not subject to these constraints. LLM technology can assist physicians by reducing the time spent on communication, allowing greater focus on medical practice. Therefore, AI and human clinicians can complement each other to enhance empathy and provide significant benefits.

Compared with physicians’ replies on the AskDocs forum, ChatGPT’s responses were rated by evaluators as adhering more closely to medical consensus, being more factually accurate, and maintaining a consistently empathetic tone. Our point-biserial correlation analysis indicated that these attributes, as well as more comprehensive and safer content, were moderately positively associated with higher quality ratings, whereas word count showed only a weak positive association. Moreover, for both physician and AI chatbot responses, higher empathy contributed significantly to higher quality ratings, underscoring its importance in meeting patient needs. Notably, empathy emerged as a particular strength of ChatGPT-4’s answers. This finding may partly explain why ChatGPT-4’s responses were rated more favorably than those of physicians, even after accounting for accuracy, alignment with consensus, and potential harm in our analysis. These findings provide the actionable insight that communication features exemplified by ChatGPT-4, particularly its consistent expression of empathy, can be strategically incorporated into physicians’ written replies to enhance their perceived quality. The results also suggest that physicians could improve adherence to medical community consensus to provide higher-quality responses. Deep-learning techniques enable AI to extract pertinent information from patient interactions and generate longer27, more detailed responses than physicians4,5. While detailed responses correlate with higher quality, excessively long replies may overwhelm patients. For LLM-driven chatbots, ensuring accuracy and appropriateness is unquestionably crucial. Prior studies found that LLM-generated and physician responses exhibited comparable inaccuracy or potential harm4. Our examples demonstrate that AI chatbots do not consistently provide accurate or appropriate responses.
Nevertheless, our findings and previous studies5 show that healthcare professionals consistently preferred LLM-generated responses over physicians’ responses. Even so, the explainability of AI systems, essential for ensuring accuracy and appropriateness, remains a persistent challenge in fostering trust among healthcare professionals for critical decision-making28.
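As a reading aid for the predictor analysis discussed above, ordinal logistic regression is commonly written in its proportional-odds form. The formulation below is a sketch under that assumption; the link function and parameterization actually used in the analysis are not stated here, and the symbols are introduced only for illustration.

$$
\operatorname{logit} P(Y \le j \mid \mathbf{x}) = \theta_j - \boldsymbol{\beta}^{\top}\mathbf{x}, \qquad j = 1, \dots, J-1
$$

Here $Y$ is the ordinal quality rating with $J$ categories, $\mathbf{x}$ collects the predictors (for example, empathy, alignment with medical consensus, and word count), $\theta_j$ are ordered category thresholds, and $\boldsymbol{\beta}$ is shared across thresholds. Under this sign convention, a positive coefficient means that a higher predictor value shifts responses toward higher quality categories, with $\exp(\beta_k)$ interpreted as the corresponding odds ratio.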

Our results indicate that incorporating AI into online healthcare platforms may contribute to generating responses that are both more empathetic and more closely aligned with established medical guidelines, qualities that are sometimes lacking or in need of improvement in physician responses. However, human oversight is essential to ensure these responses are accurate, appropriate, and aligned with medical standards. Recent findings have shown that as chatbots such as ChatGPT continuously learn from extensive datasets and refine their responses with updated medical knowledge, their potential for harm is perceived to be lower. Even so, based on our findings, ChatGPT cannot replace human physicians. To ensure accuracy and safety, healthcare professionals must verify AI-generated responses, as errors in medical information can have serious consequences. ChatGPT also cannot interpret body language and other nonverbal cues crucial in medical consultations. Additionally, it can produce “hallucinations” (plausible but incorrect information)29, and biased training data could result in biased outputs, perpetuating existing biases30. Overreliance on ChatGPT can also reduce patient compliance and encourage self-diagnosis.

LLMs have the potential to revolutionize medical knowledge dissemination, providing efficient information retrieval in fast-paced clinical settings and enhancing healthcare decision-making. High-quality AI responses improve patient outcomes31, reduce unnecessary clinic visits, and make complex medical information more accessible32. Chatbots can enhance clinical workflows by assisting in triage, delivering preventive care information, and supporting the management of chronic conditions such as sinusitis or hearing loss. Therefore, a collaborative model, in which experts review and correct AI-generated content or ChatGPT refines a draft prepared by a physician, can be more effective. Future research should focus on refining chatbot capabilities to minimize risks and explore how they can be safely and effectively integrated into diverse healthcare settings, ensuring their reliable use in strengthening patient support and engagement.

While this study offers valuable insights, it is subject to several limitations. First, the data were sourced from a single English-language online forum, and physician responses were drawn exclusively from this platform. Therefore, our findings should be interpreted within the specific context of this online setting and not generalized to physician communication more broadly. Additionally, this study utilized an English-language medical consultation forum and does not account for medical consultations conducted in other languages or the distinctive characteristics of physician responses in different cultural contexts. Variations in medical communication arising from linguistic and cultural differences may influence the comparison between AI and human physicians. For instance, in languages such as Japanese, where subjects are often omitted, user inquiries tend to be more context-dependent, necessitating additional natural language processing for AI to generate appropriate responses. Second, the AI-generated responses were assessed in a controlled setting, which does not fully reflect their effectiveness in real-world clinical consultations. Potential bias may arise from ChatGPT-4’s tendency to repeat user questions and produce significantly longer responses than those of physicians. These features of ChatGPT-4 may be misinterpreted as empathetic communication. While excessive information sometimes hinders reader comprehension, the longer responses generated by ChatGPT in this study were more likely to provide detailed and comprehensive information, which may have led evaluators to perceive them as higher in quality. Furthermore, the responses may have been influenced by training data that included physician input outside the dataset used in this study. The input prompt, which directed ChatGPT-4 to simulate an ENT specialist in a Reddit-like context, may have further biased its outputs by aligning them with the stylistic norms of online medical forums.
Third, an additional limitation involves our detailed prompt engineering approach. General or vague prompts often produce substantial variability in LLM outputs, complicating consistent evaluation of accuracy and clinical utility for real-world patients33. Without structured prompts, inherent variability in LLM responses limits reproducibility and the meaningful assessment of chatbot value in patient consultations. Therefore, we intentionally utilized detailed prompts to achieve consistent and reproducible results. Fourth, this study did not investigate the impact of variations in initial input prompts, such as alternative role instructions or task framing, on the AI’s responses. Differences in prompts can lead to variability in content, tone, and perceived empathy. The inconsistency in ChatGPT’s reproducibility under different prompts poses a significant challenge to its practical application in clinical settings. Fifth, the evaluations conducted by an expert panel of physicians may have introduced bias. The subjective nature of empathy assessments might not accurately represent the experiences of typical patients or the range of patient interactions. Specifically, the evaluators’ expertise and prior expectations regarding AI-generated content could have unconsciously influenced their judgments. Sixth, our study relied solely on physician evaluators to assess empathy and thus excluded direct patient feedback, which may capture different aspects of empathetic communication. Finally, the study did not address the long-term outcomes or safety of AI-driven advice, leaving the clinical reliability and efficacy of such recommendations unresolved.

Our cross-sectional study, which examined physician–patient interactions on a single online forum, demonstrates the considerable potential of LLM-driven chatbots to deliver high-quality, tailored answers to complex medical inquiries within text-based online consultations. Within this specific setting, this study highlights ChatGPT’s strengths in aligning with prevailing medical consensus, providing accurate information, and consistently exhibiting empathy in its responses. Physicians can leverage these strengths to improve their healthcare consultations. By integrating AI insights, physicians may better address complex, nuanced inquiries, produce responses that align with medical consensus, and exhibit empathy. Moreover, AI-driven chatbots could offer immediate, accurate guidance to patients and caregivers, addressing concerns and guiding next steps, making them valuable in clinical settings such as triage and telemedicine. To fully realize this potential, future efforts should improve AI transparency and explainability and ensure rigorous monitoring of AI-generated responses to maintain accuracy, appropriateness, and adherence to medical standards. Incorporating patient feedback and conducting long-term follow-ups are essential to enhance the reliability and acceptance of AI in online, text-based medical consultations.
